|

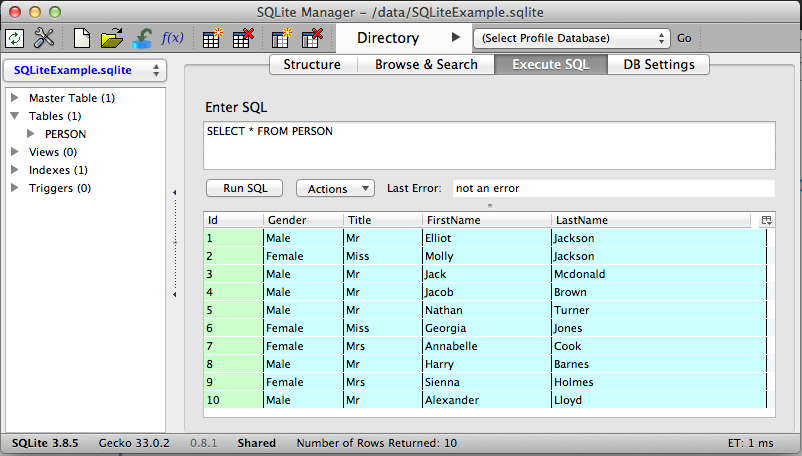

The INSERT will merge myfile into users with a single command, so sqlite can optimize the operation. ON CONFLICT (ID) DO UPDATE SET username = ername SELECT ID, Name from myfile WHERE true - WHERE true needed to avoid confusion with following ON Then, you can use a single query to update the main table: PRAGMA temp_store = MEMORY ĬREATE TEMPORARY TABLE myfile (ID INTEGER PRIMARY KEY, Name) Temporary tables can be stored in memory for maximum performance and the import can be done sequentially without having to check if the record exists or is a new one. You could import your data from your file and insert it in a temporary table. Are there other ways besides sorting it and only then writing to the database? How to improve performance? Especially, if I have a non-sorted list of ID-Name pairs. This means that if you update the whole table, you could need to read all pages of the table 100 times.

If you update the table in random ID order, every update will most probably need to update a row stored on a different page than the previous one, so sqlite needs to read and write a different page every time. Even if you updated all the rows of the table, every page would be read and written to disk only once. If you update the table in increasing ID order, since a single page con contain multiple rows, you are accessing the same page for as much as 100 updates (in the example of 100 rows per page), then every 100 updates you switch to another page and modify it for another number of updates. If you update repeatedly the same row, the page is already cached in memory and it will not be flushed to disk until the end of transaction, so there is no need to read or write anything to disk. This means that for every UPDATE sqlite has to find the page that contains the row with the specified ID, read it, modify it and then write it to disk. I mean in real sutiations IDs are near to random and as I know the performance is not as bad as mine.Įven if they are enclosed in a single transaction, every UPDATE is executed singularly. Why this happens? I think it may be somehow related to caching, but I don't know if it's normal. This means that the table is stored in pages on disk in ID order and IDs of similar values are stored in the same page. Default size of page is 4096 bytes, so if each row is 40 bytes of data then a page can contain up to 100 rows.Īlso, if ID is an integer primary key, then ID is used as the rowid. Rows are stored in pages within the database and sqlite will always read or write whole pages. How to improve performance? Especially, if I have a non-sorted list of ID-Name pairs.I mean in real sutiations IDs are near to random and as I know the performance is not as bad as mine. Why this happens? I think it may be somehow related to caching, but I don't know if it's normal.I also noticed the bigger range of IDs I choose the slower it becomes, but IDs from that range are always exist in the table. Then the results become disappointing: Speed: 514.18/sec If I use a random ID c.execute('UPDATE users SET username = ? WHERE id = ?', ('random', random.randint(0, 1000000000)))

Where inc is increased by 1 after every UPDATE, then the results would be: Speed: 233977.17/secīut. With c.execute('UPDATE users SET username = ? WHERE id = ?', ('random', inc)) If I replace c.execute('UPDATE users SET username = ? WHERE id = ?', ('random', 555)) In the example above the ID is constant and equals to 555 (the value doesn't matter, the speed is the same also for big values of ID).įor this example the speed is (UPDATEs per second): Speed: 376665.88/sec The value of username doesn't affect on performance, so let it be always random. The script for experiments are: import sqlite3Ĭonn = nnect('database.sqlite')Ĭ.execute('UPDATE users SET username = ? WHERE id = ?', ('random', 555)) Then I started experimenting with the script and found that UPDATEs are much faster when IDs are a constant or incrementing for example. ID is a primary key, so it must be indexed automatically and it should not slow down UPDATEs, right? Much slower than it has to be for UPDATE (not even INSERT). I realized that it worked extremely slow. I've written a simple python script for this purpose.

Of course, if there is such ID in the table (do not insert a new record if there is no such user with this ID, just UPDATE existing one). I need to write all the names from the file into the database using corresponding IDs. I have a large database and a file with ID-Name pairs in each line, where ID corresponds to the primary key of the table in the database.

0 Comments

Leave a Reply. |

RSS Feed

RSS Feed